Automating High-Resolution Imaging at Full Hadal Depth

A view of the southern subduction wall in the Western Pool of Challenger Deep, Mariana Trench, taken from inside the submersible DSV Limiting Factor on July 12, 2022. Pilot: CDR Victor Vescovo, USN (Ret.)

Hadal missions are different. Once you’re operating deeper than 6,000 m, you do not have the option to “just adjust it live.” You plan the mission, deploy, and the system has to do its job without supervision.

That is why autonomy matters more than almost any single spec at full ocean depth.

SubC Imaging’s Rayfin Trench is an 11 km-rated subsea camera designed for deep ocean landers, AUVs, and other untethered systems. The camera is built for high-quality imaging at depth, but the bigger shift is how it supports autonomous workflows. Instead of relying on custom coding or one-off scripting, teams can use a no-code script builder to automate capture, lighting, and sensor-triggered actions.

Autonomy in Hadal Imaging

Autonomy is not just “it can run unattended.” In a hadal deployment, autonomy usually means four practical things:

Capture only what you need so you do not waste storage or power during long descent and transit phases.

Coordinate the payload so the camera, lights, and any sensors run in sync.

Trigger actions from real conditions rather than guessing timing.

Support long duration missions using sleep and wake cycles.

The Rayfin Trench is designed around those realities.

No-Code Scripting That Fits Subsea Missions

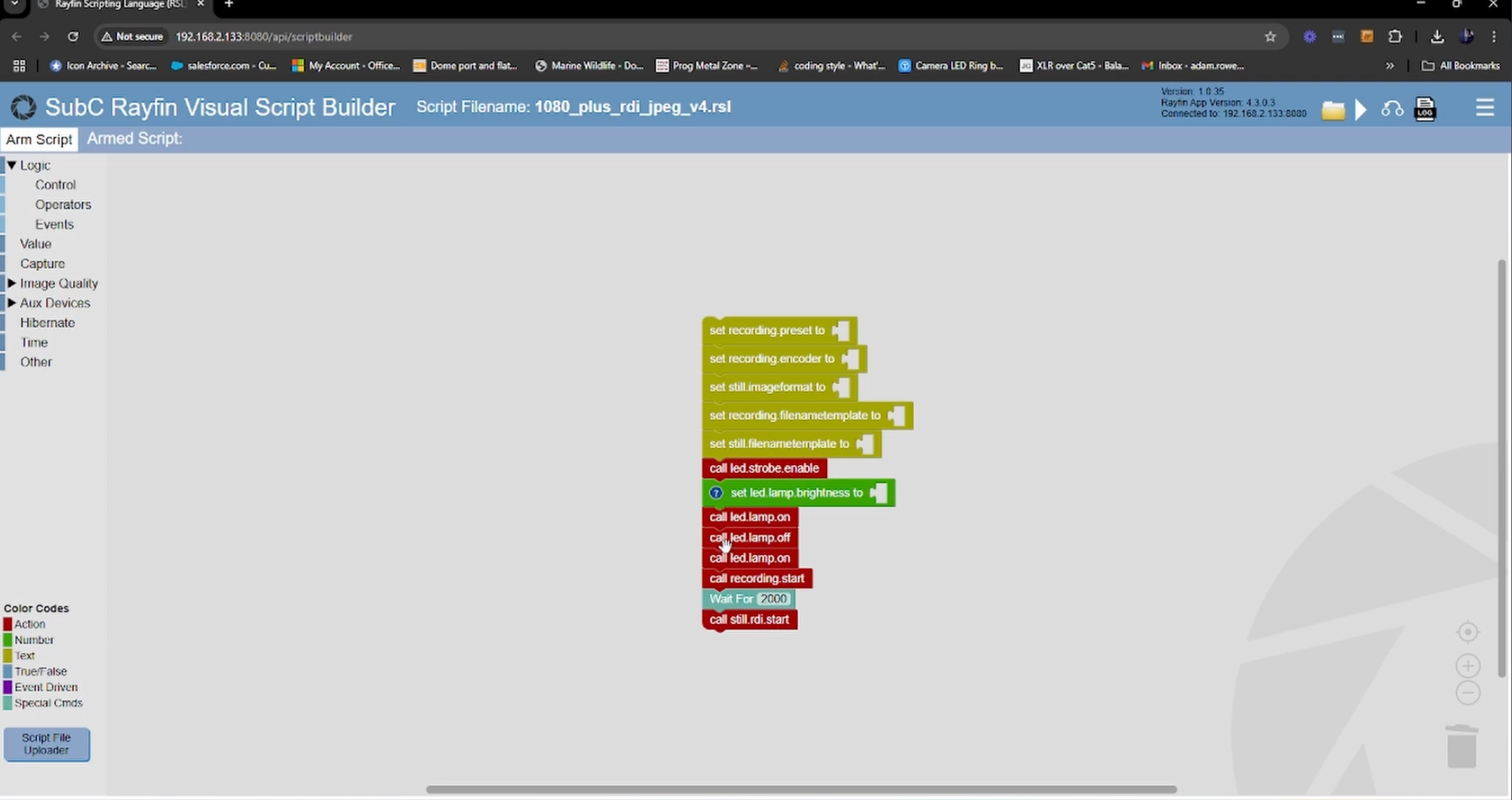

Example of a no-code script.

A lot of autonomous systems technically support scripting, but the barrier is time and effort. Writing and testing scripts can be its own project.

The Rayfin Trench is built to reduce that friction. The no-code script builder is meant for visual, drag-and-drop logic that matches how subsea teams already think about missions.

That includes simple schedules, event-based triggers, and conditional actions like “only record when X happens” or “turn lights on only during capture.”

Event-Based Capture

At extreme depth, “record everything” is often the least efficient choice. It increases power draw, fills storage, and creates a big review workload after recovery.

Event-based capture flips the model. Instead of recording constantly, you define the conditions that make recording worth it.

The Rayfin Trench supports triggers using internal orientation sensing (tilt and roll) and external inputs like NMEA data. In practical terms, that can mean starting capture only when the lander is stable on the seabed, or only during a survey window when the vehicle is on task.
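To make the trigger idea concrete, here is a minimal Python sketch of the two inputs mentioned above: a "stable on the seabed" check based on tilt and roll, and a validity check for incoming NMEA 0183 sentences. This is not the Rayfin scripting interface, which is visual and no-code. The names `Orientation`, `StabilityTrigger`, and `nmea_fields`, along with the window size and the 1-degree threshold, are assumptions made for illustration. The checksum rule (XOR of the characters between `$` and `*`) is the standard NMEA 0183 convention.

```python
from collections import deque
from dataclasses import dataclass
from functools import reduce


@dataclass
class Orientation:
    """One orientation sample (hypothetical structure)."""
    tilt_deg: float
    roll_deg: float


class StabilityTrigger:
    """Reports True once the last `window` samples stay within a small spread."""

    def __init__(self, window: int = 5, max_change_deg: float = 1.0):
        self.samples = deque(maxlen=window)
        self.max_change_deg = max_change_deg

    def update(self, sample: Orientation) -> bool:
        self.samples.append(sample)
        if len(self.samples) < self.samples.maxlen:
            return False  # not enough history yet
        tilts = [s.tilt_deg for s in self.samples]
        rolls = [s.roll_deg for s in self.samples]
        return (max(tilts) - min(tilts) <= self.max_change_deg
                and max(rolls) - min(rolls) <= self.max_change_deg)


def nmea_fields(sentence: str):
    """Split an NMEA 0183 sentence into fields, or return None if invalid."""
    sentence = sentence.strip()
    if not sentence.startswith("$") or "*" not in sentence:
        return None
    body, _, checksum = sentence[1:].partition("*")
    # The checksum is the XOR of every character between '$' and '*'.
    expected = reduce(lambda acc, ch: acc ^ ord(ch), body, 0)
    try:
        if int(checksum[:2], 16) != expected:
            return None
    except ValueError:
        return None
    return body.split(",")
```

A capture routine would call `update()` on every orientation sample and start recording the first time it returns True, which avoids guessing how long the lander needs to settle.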

Coordinating Lights, Lasers, And Sensors

Autonomous imaging is not only about the camera. Lighting and other payload components are often the bigger power draw, and they are just as important to control.

The Rayfin Trench can be scripted to trigger and power external devices through its auxiliary ports, including high-depth lighting like Aquorea Trench LEDs, lasers, or third-party sensors. The point is simple: the payload runs as one system, not a collection of devices doing their own thing.
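One way to picture "the payload runs as one system" is to tie the power state of the lights and lasers to the capture itself. The Python sketch below does that with a context manager, so the devices are switched off even if a capture fails. `AuxPort`, `powered`, and `capture_still` are hypothetical names used only for this illustration and do not describe the camera's actual port control.

```python
from contextlib import contextmanager


class AuxPort:
    """Hypothetical stand-in for a switchable auxiliary power port."""

    def __init__(self, name: str):
        self.name = name
        self.powered = False
        self.log = []  # history of (port name, "on"/"off") events

    def set_power(self, on: bool) -> None:
        self.powered = on
        self.log.append((self.name, "on" if on else "off"))


@contextmanager
def powered(*ports: AuxPort):
    """Power ports on in order; always power them off again in reverse order."""
    switched = []
    try:
        for port in ports:
            port.set_power(True)
            switched.append(port)
        yield
    finally:
        for port in reversed(switched):
            port.set_power(False)


def capture_still(lights: AuxPort, lasers: AuxPort, take_picture):
    """Run `take_picture` with lights and lasers on only for its duration."""
    with powered(lights, lasers):
        return take_picture()
```

The design choice here is that nothing outside `capture_still` ever switches the lights, so there is no code path that leaves them draining the battery between captures.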

Hibernation And Wake Cycles For Long Deployments

For long-term monitoring and time-lapse, the limiting factor is usually power. Many deployments rely on a wake, capture, sleep pattern to extend mission life.

The Rayfin Trench supports an optional hibernation approach so capture windows can be scheduled, then the system can return to a low-power state between them. That design is especially useful for long deployments where recovery is expensive and infrequent.
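The effect of a wake, capture, sleep pattern on mission life can be estimated with simple arithmetic. The Python sketch below computes the average power of a duty-cycled schedule and the resulting endurance. All figures in the example (20 W active, 0.05 W asleep, a 1,000 Wh battery, 2 minutes of capture per hour) are made-up illustration values, not Rayfin Trench specifications.

```python
def average_power_w(active_w: float, sleep_w: float,
                    active_s: float, period_s: float) -> float:
    """Average power over one wake/sleep period, weighted by time in each state."""
    if period_s <= 0 or not 0 <= active_s <= period_s:
        raise ValueError("active_s must lie between 0 and period_s")
    duty = active_s / period_s
    return active_w * duty + sleep_w * (1 - duty)


def mission_days(battery_wh: float, avg_w: float) -> float:
    """Days of operation for a given usable battery capacity and average draw."""
    return battery_wh / avg_w / 24


# Hypothetical payload: capture for 2 minutes every hour, sleep otherwise.
duty_cycled_w = average_power_w(active_w=20.0, sleep_w=0.05,
                                active_s=120, period_s=3600)
continuous_w = average_power_w(active_w=20.0, sleep_w=0.05,
                               active_s=3600, period_s=3600)

print(f"duty-cycled: {mission_days(1000, duty_cycled_w):.1f} days")
print(f"continuous:  {mission_days(1000, continuous_w):.1f} days")
```

With these made-up numbers, the duty-cycled schedule averages 0.715 W and lasts roughly 58 days, against about 2 days of continuous recording.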

Example Autonomous Mission Script for a Hadal Lander

Use case: Hadal lander deployment

Goal: Skip descent footage, capture only after touchdown and stabilization

Script logic (no-code):

Start with lights off

Wait until depth threshold is met

Wait 60 seconds for tilt/roll to stabilize

Turn lights on (preset intensity)

Record video in 10-minute segments

Capture a still every 5 minutes

Stop at scheduled end time (or low-battery threshold)

That kind of script is simple, but it changes outcomes. You come back with more usable data and less wasted recording.
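For readers who think in code, the same logic can be sketched as a small state machine in Python. This is an illustration of the steps listed above, not an export of the no-code builder. The class `LanderMission`, the action names, and the idea of calling `step()` with a timestamp, depth, and battery level are all assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Phase(Enum):
    DESCENT = auto()
    SETTLING = auto()
    RECORDING = auto()
    DONE = auto()


@dataclass
class LanderMission:
    depth_threshold_m: float
    settle_s: float = 60            # fixed wait for tilt/roll to stabilize
    segment_s: float = 600          # 10-minute video segments
    still_interval_s: float = 300   # one still every 5 minutes
    end_time_s: float = 3600        # scheduled end, seconds since start
    min_battery_pct: float = 10     # hypothetical low-battery cutoff
    phase: Phase = field(default=Phase.DESCENT, init=False)
    actions: list = field(default_factory=list, init=False)

    def __post_init__(self):
        self.actions.append((0, "lights_off"))  # start with lights off
        self._phase_start = 0
        self._next_segment = 0
        self._next_still = 0

    def step(self, t, depth_m, battery_pct):
        """Advance the mission using one telemetry sample taken at time t."""
        if self.phase is Phase.DESCENT:
            if depth_m >= self.depth_threshold_m:
                self.phase, self._phase_start = Phase.SETTLING, t
        elif self.phase is Phase.SETTLING:
            if t - self._phase_start >= self.settle_s:
                self.phase = Phase.RECORDING
                self._next_segment = t
                self._next_still = t + self.still_interval_s
                self.actions.append((t, "lights_on"))
        if self.phase is Phase.RECORDING:
            if t >= self.end_time_s or battery_pct <= self.min_battery_pct:
                self.actions += [(t, "stop_recording"), (t, "lights_off")]
                self.phase = Phase.DONE
                return
            if t >= self._next_segment:
                # Starting a new segment implicitly closes the previous one.
                self.actions.append((t, "start_segment"))
                self._next_segment += self.segment_s
            if t >= self._next_still:
                self.actions.append((t, "capture_still"))
                self._next_still += self.still_interval_s
```

Feeding the object simulated telemetry before deployment is a cheap way to check that nothing is recorded during descent and that the lights always end in the off state.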

Learn More About How to Use a Visual Script Builder to Automate Underwater Data Capture

How Does This Help Teams Working in the Hadal Zone?

If you’re building missions at full ocean depth, the Rayfin Trench is meant to reduce the workload that usually sits around imaging:

Less manual setup and fewer custom scripts

Better control of power and storage

Cleaner datasets with fewer hours of “nothing happened” footage

More repeatable mission design across deployments and platforms

Conclusion

Full ocean depth is not forgiving. The cost of a mistake is high, and you cannot fix it mid-mission. That is why autonomy, not just depth rating, is a core requirement for hadal imaging.

The Rayfin Trench is built to help teams plan autonomous imaging missions with no-code scripting, event-based triggers, coordinated payload control, and optional hibernation for longer deployments.

Frequently Asked Questions

What is the hadal zone?

The hadal zone generally refers to ocean depths below about 6,000 meters, primarily in trenches.

Why does autonomy matter for hadal imaging?

Because real-time control is often not possible, and power and storage have to be managed carefully over long deployments.

What does event-based capture mean?

It means recording or taking stills only when defined conditions are met, rather than recording continuously.

What does no-code scripting mean?

It means building mission logic with a visual interface instead of writing software code.